According to Jules Verne in Master of the World:

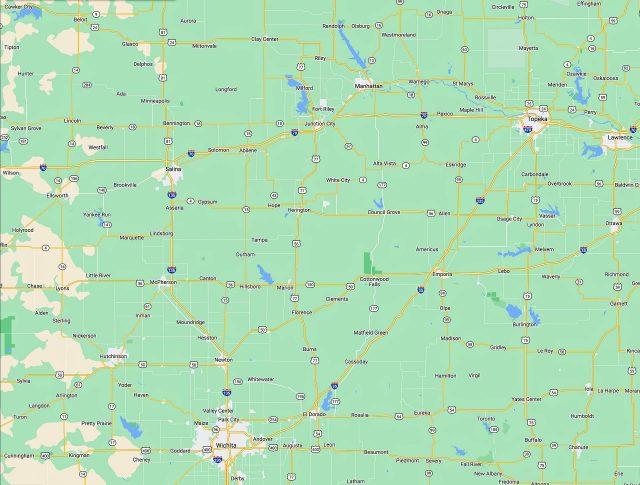

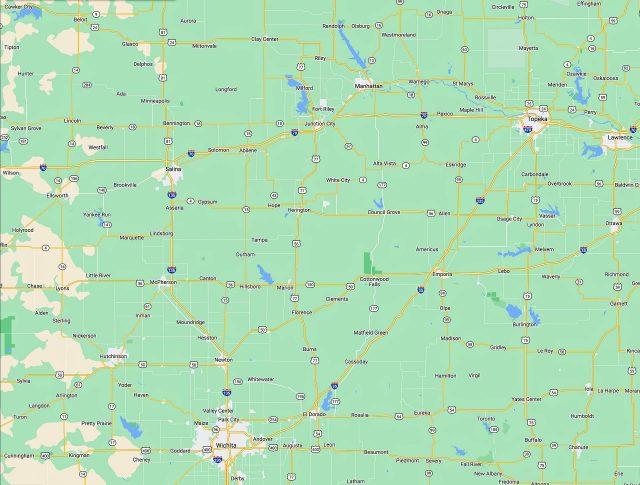

“Lake Kirdall in Kansas, forty miles west of Topeka, is little known. It deserves wider knowledge, and doubtless will have it hereafter, for attention is now drawn to it in a very remarkable way.

“This lake, deep among the mountains, appears to have no outlet. What it loses by evaporation, it regains from the little neighboring streamlets and the heavy rains.

“Lake Kirdall covers about seventy-five square miles, and its level is but slightly below that of the heights which surround it. Shut in among the mountains, it can be reached only by narrow and rocky gorges. Several villages, however, have sprung up upon its banks. It is full of fish, and fishing-boats cover its waters.

“Lake Kirdall is in many places fifty feet deep close to the shore. Sharp, pointed rocks form the edges of this huge basin. Its surges, roused by high winds, beat upon its banks with fury, and the houses near at hand are often deluged with spray as if with the downpour of a hurricane. The lake, already deep at the edge, becomes yet deeper toward the center, where in some places soundings show over three hundred feet of water.

“The fishing industry supports a population of several thousands, and there are several hundred fishing boats in addition to the dozen or so of little steamers which serve the traffic of the lake. Beyond the circle of the mountains lie the railroads which transport the products of the fishing industry throughout Kansas and the neighboring states….”

If Verne’s account is accurate, Lake Kirdall would be in the Manhattan/Fort Riley area. I’ve lived in Kansas the larger portion of my life, and I’ve never noticed any mountains in all my wanderings around the state, let alone mountain lakes. Evidently French science-fiction writers know as much about the plains states as Japanese blues bands do about the deep South.

Joseph Moore mentioned the lake in his review of Master of the World, cited by John C. Wright in his list of “The Fifty Essential Authors of Science Fiction.” I hadn’t read Verne since grade school and was curious, so I tracked the book down. It was okay, but just okay. I’m surprised that Wright included it in his note on Verne. It may be that I expected the wrong things from it, or that I need to have read more of Verne to fully appreciate it. Brandon Watson gives it a recommendation, along with a couple of related novels.

The greatest mystery to me is this: why did Verne invent a lake in a spot where two minutes with an atlas would have told him it couldn’t exist, when the quite real and remarkable Crater Lake in Oregon was available?

***

David Breitenbeck:

Among its many other marks, one sign that the American education system is a complete fraud is the fact that English classes never present H.P. Lovecraft, Raymond Chandler, R.E. Howard, Walter B. Gibson, or the like as examples of American Literature for students to read.